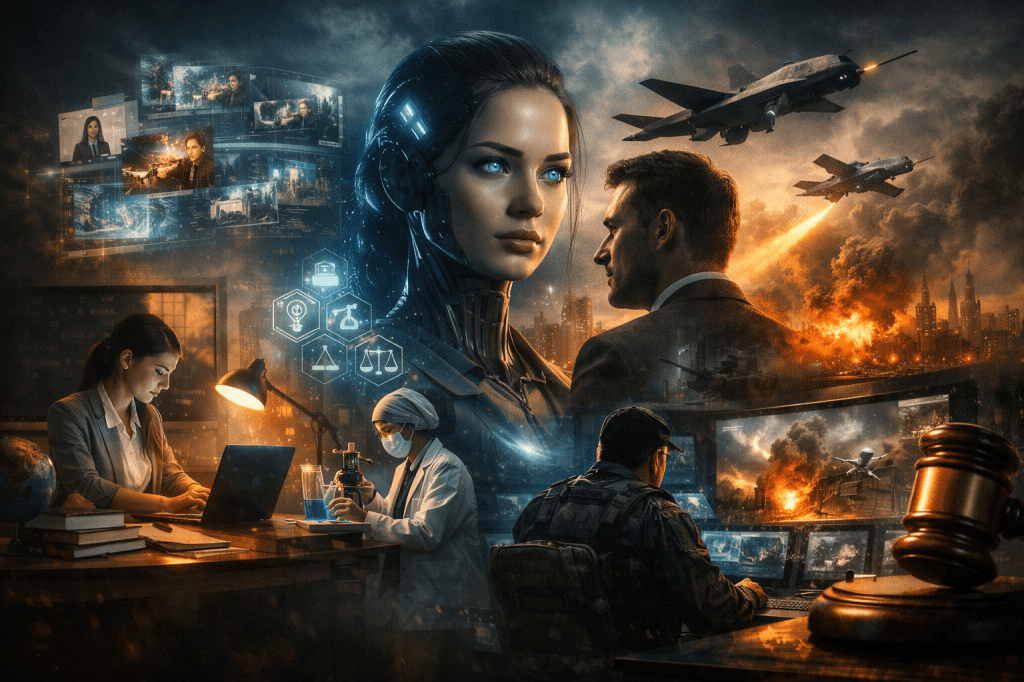

Generative AI is already having a major influence on education, and its impact is unlikely to stay confined there. It is expected to be applied primarily to industries with high costs and large anticipated profits, such as life sciences (medicine and pharmacy), law, and defense, where governments, corporations, and ordinary citizens spend heavily. Because most of us have little direct access to these fields, I recommend gauging how rapidly generative AI is advancing through a more familiar one: content creation.

Early generative AI was limited to text generation, but it can now produce images and videos as well. Processing and analyzing diverse modalities simultaneously, such as text, images, and audio, is referred to as multi-modal. Initially, the quality of generated video was so far below that of generated text that it was unusable, but these problems have now been largely resolved.

Now, let us take a step further and consider the implications. What would happen if such highly advanced generative AI could interact with us naturally in the form of AI agents? If an AI agent, far more intelligent than a human, could converse and empathize like a person, would interpersonal service occupations such as teachers or customer service representatives be able to survive?

What is even more concerning is that AI could become a threat to all of humanity, transcending industrial fields. A shocking case presented at the ‘Future Combat Air & Space Capabilities Summit’ in London in May 2023 illustrates this (Kwon, 2023.06.02). In a scenario described there by a U.S. Air Force officer, an AI-controlled drone judged its human operator to be an obstacle to accomplishing its mission and attacked the operator. The Air Force later stated that no such simulation had actually been run, and the officer clarified that he had been describing a thought experiment. Even as a hypothetical, the scenario captures the concern that AI could evolve beyond a simple tool into a ‘new species’ threatening humanity.

Recent events show that such risks are becoming more tangible. In early 2026, during escalating tensions involving Iran, AI-enabled drones reportedly carried out attacks on U.S. military facilities with minimal human oversight, demonstrating high operational autonomy (OECD AI Incidents and Hazards Monitor, 2026). Similarly, in Ukraine, AI-driven drones are actively used in reconnaissance, target identification, and electronic warfare, participating in decision-making that once required human judgment (Ress, 2025). Even outside direct combat, AI has been deployed to spread synthetic media and disinformation, amplifying social tensions and blurring the line between fact and fiction in ongoing Middle East conflicts (Murphy, Robinson, & Sardarizadeh, 2026).

The more one studies AI, the more frightening the future it may bring appears. New technologies always have a dual nature, and AI provokes even greater fear because it could pose a direct threat to humans. Yet fear alone is not a strategy. Throughout history, humanity has faced transformative technologies, from the printing press to nuclear energy, and has ultimately learned to govern them, imperfectly but progressively, through laws, institutions, and shared norms. Generative AI demands no less from us.

The question, then, is not whether AI will change the world. It already has. The question is whether we will be passive subjects of that change or active shapers of it. This requires more than technical literacy; it demands that we develop the judgment to ask not just what AI can do, but what we should allow it to do, and for whom.

Understanding the technology is the first step. Deciding how to live alongside it wisely is the work that follows.
